Neural Network from Scratch | TensorFlow for Hackers (Part IV)

— Deep Learning, Neural Networks, TensorFlow, Python — 5 min read

Share

Developing models using TensorFlow is easy and fun, but real understanding can be achieved only via reading and implementing the algorithms on your own. This time we will skip TensorFlow entirely and build a Neural Network (shallow one) from scratch, using only pure Python and NumPy. The real challenge is to implement the core algorithm that is used to train (Deep) Neural Networks - Backpropagation. Shall we dance?

Setup

Let’s begin by preparing our environment and seeding the random number generator properly:

1import numpy as np2import matplotlib.pyplot as plt3import seaborn as sns4from pylab import rcParams56from preprocessing import *7from math_utils import *8from plotting import *910%matplotlib inline1112sns.set(style='whitegrid', palette='muted', font_scale=1.5)1314rcParams['figure.figsize'] = 12, 61516RANDOM_SEED = 421718np.random.seed(RANDOM_SEED)We are importing 3 custom modules that contain some helper functions that we are going to use along the way!

Background

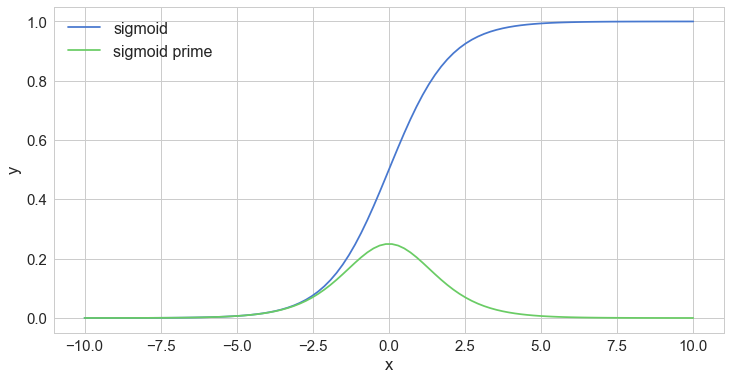

Sigmoid (and it’s derivative)

The sigmoid function is used quite commonly in the realm of deep learning, at least it was until recently. It has distinct S shape and it is a differentiable real function for any real input value. Additionally, it has a positive derivative at each point. More importantly, we will use it as an activation function for the hidden layer of our model. Here’s how it is defined:

σ(x)=1+e−x1It’s first derivative (which we will use during the backpropagation step of our training algorithm) has the following formula:

d(x)dσ(x)=σ(x)⋅(1−σ(x))So, the derivative can be expressed using the original sigmoid function. Pretty cool, eh? Don’t like formulas? Let’s look at a picture:

1x = np.linspace(-10., 10., num=100)2sig = sigmoid(x)3sig_prime = sigmoid_prime(x)45plt.plot(x, sig, label="sigmoid")6plt.plot(x, sig_prime, label="sigmoid prime")7plt.xlabel("x")8plt.ylabel("y")9plt.legend(prop={'size' : 16})10plt.show()

The derivative shows us the rate of change of a function. We can use it to determine the “slope” of that function. The highest rate of change for the sigmoid function is when x=0, as it is evident from the derivative graph (in green).

If your Calculus feels a bit rusty take a look at this worked example. That should get you there.

Softmax

The softmax function can be easily differentiated, it is pure (output depends only on input) and the elements of the resulting vector sum to 1. Here it is:

σ(z)j=∑Kk=1ezkezjforj=1,...,kIn probability theory, the output of the softmax function is sometimes used as a representation of a categorical distribution. Let’s see an example result:

1softmax(np.array([[2, 4, 6, 8]]))1array([[ 0.00214401, 0.0158422 , 0.11705891, 0.86495488]])The output has most of its weight corresponding to the input 8. The softmax function highlights the largest value(s) and suppresses the smaller ones.

NumPy

We will use NumPy primarily for its Linear Algebra magic when working with matrices. Let’s define 2 matrices:

1m1 = np.array([[1, 2, 3], [2, 3, 4]])2m2 = np.array([[3, 2, 1], [4, 3, 2]])34m11array([[1, 2, 3],2 [2, 3, 4]])See the dimensions of the first matrix (rows, columns):

1m1.shape1(2, 3)Transpose the second matrix:

1m2.T1array([[3, 4],2 [2, 3],3 [1, 2]])And see its dimensions:

1m2.T.shape1(3, 2)Find the dot product of the matrices:

1m1.dot(m2.T)1array([[10, 16],2 [16, 25]])Finally, matrix multiplication:

1np.multiply(m1, m2)1array([[3, 4, 3],2 [8, 9, 8]])NumPy is pretty useful. You just have to be careful with the dimensions!

Backpropagation

Backpropagation is the backbone of almost anything we do when using Neural Networks. The algorithm consists of 3 subtasks:

- Make a forward pass

- Calculate the error

- Make backward pass (backpropagation)

In the first step, backprop uses the data and the weights of the network to compute a prediction. Next, the error is computed based on the prediction and the provided labels. The final step propagates the error through the network, starting from the final layer. Thus, the weights get updated based on the error, little by little.

Let’s build more intuition about what the algorithm is actually doing:

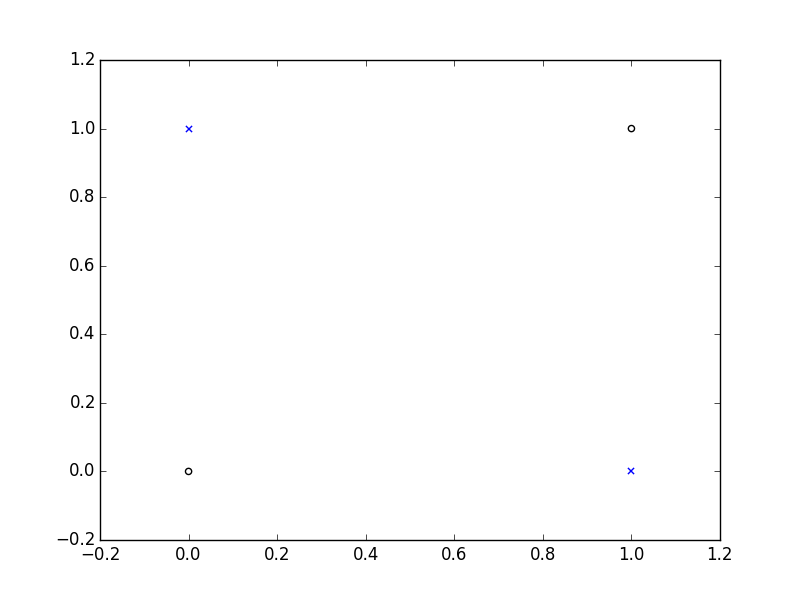

We will try to create a Neural Network (NN) that can properly predict values from the XOR function. Here is its truth table:

| Input 1 | Input 2 | Output |

|---|---|---|

| 0 | 0 | 0 |

| 0 | 1 | 1 |

| 1 | 0 | 1 |

| 1 | 1 | 0 |

Here is a visual representation:

Let start by defining some parameters:

1epochs = 500002input_size, hidden_size, output_size = 2, 3, 13LR = .1 # learning rateOur data looks like this:

1X = np.array([[0,0], [0,1], [1,0], [1,1]])2y = np.array([ [0], [1], [1], [0]])Initialize the weights of our NN to random numbers (using proper size):

1w_hidden = np.random.uniform(size=(input_size, hidden_size))2w_output = np.random.uniform(size=(hidden_size, output_size))Finally, implementation of the Backprop algorithm:

1for epoch in range(epochs):23 # Forward4 act_hidden = sigmoid(np.dot(X, w_hidden))5 output = np.dot(act_hidden, w_output)67 # Calculate error8 error = y - output910 if epoch % 5000 == 0:11 print(f'error sum {sum(error)}')1213 # Backward14 dZ = error * LR15 w_output += act_hidden.T.dot(dZ)16 dH = dZ.dot(w_output.T) * sigmoid_prime(act_hidden)17 w_hidden += X.T.dot(dH)1error sum [-1.77496016]2error sum [ 0.00586565]3error sum [ 0.00525699]4error sum [ 0.0003625]5error sum [-0.00064657]6error sum [ 0.00189532]7error sum [ 3.79101898e-08]8error sum [ 7.47615376e-13]9error sum [ 1.40960742e-14]10error sum [ 1.49842526e-14]That error seems to be decreasing! YaY! And the implementation is not that scary, isn’t it? We just multiply the matrix containing our training data with the matrix of the weights of the hidden layer. Then, we apply the activation function (sigmoid) to the result and multiply that with the weight matrix of the output layer.

The error is computed by doing simple subtraction. During the backpropagation step, we adjust the weight matrices using the already computed error and use the derivative of the sigmoid function.

Let’s try to predict using our trained model (doing just the forward step):

1X_test = X[1] # [0, 1]23act_hidden = sigmoid(np.dot(X_test, w_hidden))4np.dot(act_hidden, w_output)1array([ 1.])What is this sorcery? The prediction is correct! You can try some of the other input examples.

Building our own Neural Network Classifier

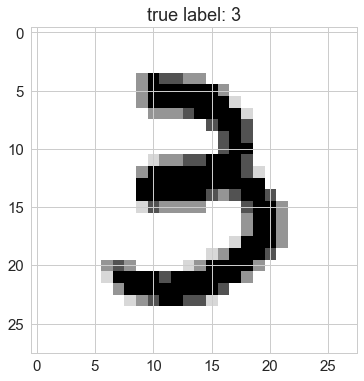

The “hello world” dataset MNIST (“Modified National Institute of Standards and Technology”), released in 1999, contains images of handwritten digits. Our goal is to build a model that correctly identify digits from a dataset of tens of thousands of handwritten digits.

We will build our own “vanilla” Neural Network classifier that learns from raw pixels using only Python and NumPy. Let’s start by reading the data:

Reading and shuffling the images

1IMAGES_PATH = 'train-images-idx3-ubyte'2LABELS_PATH = 'train-labels-idx1-ubyte'34N_FEATURES = 28 * 285N_CLASSES = 101X, y = read_mnist(IMAGES_PATH, LABELS_PATH)2X, y = shuffle_data(X, y, random_seed=RANDOM_SEED)3X_train, y_train = X[:500], y[:500]4X_test, y_test = X[500:], y[500:]We reserve 500 training examples for evaluation of our model.

Data exploration

Let’s take a look at how some handwritten digits look like:

1plot_digit(X, y, idx=1)

1plot_digit(X, y, idx=2)

1plot_digit(X, y, idx=3)

Implementing the model

Let’s define a class, called NNClassifier that does all the dirty work for us. We will implement a somewhat more sophisticated version of our training algorithm shown above along with some handy methods:

1class NNClassifier:23 def __init__(self, n_classes, n_features, n_hidden_units=30,4 l1=0.0, l2=0.0, epochs=500, learning_rate=0.01,5 n_batches=1, random_seed=None):67 if random_seed:8 np.random.seed(random_seed)9 self.n_classes = n_classes10 self.n_features = n_features11 self.n_hidden_units = n_hidden_units12 self.w1, self.w2 = self._init_weights()13 self.l1 = l114 self.l2 = l215 self.epochs = epochs16 self.learning_rate = learning_rate17 self.n_batches = n_batches1819 def _init_weights(self):20 w1 = np.random.uniform(-1.0, 1.0,21 size=self.n_hidden_units * (self.n_features + 1))22 w1 = w1.reshape(self.n_hidden_units, self.n_features + 1)23 w2 = np.random.uniform(-1.0, 1.0,24 size=self.n_classes * (self.n_hidden_units + 1))25 w2 = w2.reshape(self.n_classes, self.n_hidden_units + 1)26 return w1, w22728 def _add_bias_unit(self, X, how='column'):29 if how == 'column':30 X_new = np.ones((X.shape[0], X.shape[1] + 1))31 X_new[:, 1:] = X32 elif how == 'row':33 X_new = np.ones((X.shape[0] + 1, X.shape[1]))34 X_new[1:, :] = X35 return X_new3637 def _forward(self, X):38 net_input = self._add_bias_unit(X, how='column')39 net_hidden = self.w1.dot(net_input.T)40 act_hidden = sigmoid(net_hidden)41 act_hidden = self._add_bias_unit(act_hidden, how='row')42 net_out = self.w2.dot(act_hidden)43 act_out = sigmoid(net_out)44 return net_input, net_hidden, act_hidden, net_out, act_out4546 def _backward(self, net_input, net_hidden, act_hidden, act_out, y):47 sigma3 = act_out - y48 net_hidden = self._add_bias_unit(net_hidden, how='row')49 sigma2 = self.w2.T.dot(sigma3) * sigmoid_prime(net_hidden)50 sigma2 = sigma2[1:, :]51 grad1 = sigma2.dot(net_input)52 grad2 = sigma3.dot(act_hidden.T)53 return grad1, grad25455 def _error(self, y, output):56 L1_term = L1_reg(self.l1, self.w1, self.w2)57 L2_term = L2_reg(self.l2, self.w1, self.w2)58 error = cross_entropy(output, y) + L1_term + L2_term59 return 0.5 * np.mean(error)6061 def _backprop_step(self, X, y):62 net_input, net_hidden, act_hidden, net_out, act_out = self._forward(X)63 y = y.T6465 grad1, grad2 = self._backward(net_input, net_hidden, act_hidden, act_out, y)6667 # regularize68 grad1[:, 1:] += (self.w1[:, 1:] * (self.l1 + self.l2))69 grad2[:, 1:] += (self.w2[:, 1:] * (self.l1 + self.l2))7071 error = self._error(y, act_out)7273 return error, grad1, grad27475 def predict(self, X):76 Xt = X.copy()77 net_input, net_hidden, act_hidden, net_out, act_out = self._forward(Xt)78 return mle(net_out.T)7980 def predict_proba(self, X):81 Xt = X.copy()82 net_input, net_hidden, act_hidden, net_out, act_out = self._forward(Xt)83 return softmax(act_out.T)8485 def fit(self, X, y):86 self.error_ = []87 X_data, y_data = X.copy(), y.copy()88 y_data_enc = one_hot(y_data, self.n_classes)89 for i in range(self.epochs):9091 X_mb = np.array_split(X_data, self.n_batches)92 y_mb = np.array_split(y_data_enc, self.n_batches)9394 epoch_errors = []9596 for Xi, yi in zip(X_mb, y_mb):9798 # update weights99 error, grad1, grad2 = self._backprop_step(Xi, yi)100 epoch_errors.append(error)101 self.w1 -= (self.learning_rate * grad1)102 self.w2 -= (self.learning_rate * grad2)103 self.error_.append(np.mean(epoch_errors))104 return self105106 def score(self, X, y):107 y_hat = self.predict(X)108 return np.sum(y == y_hat, axis=0) / float(X.shape[0])All the magic is hidden within the _forward, _backward, _error and _backprop_step methods. We measure the error using cross-entropy loss function. Additionally, L1 and L2 regularizations are used to drive our training into simpler models. One preprocessing step that our model is doing internally is the encoding of the labels as one-hot vectors via the helper function - one_hot.

Our NN has a neat interface, too! Use the fit method to train it, predict to predict the class of a digit and score to assess the overall performance of the model.

Training

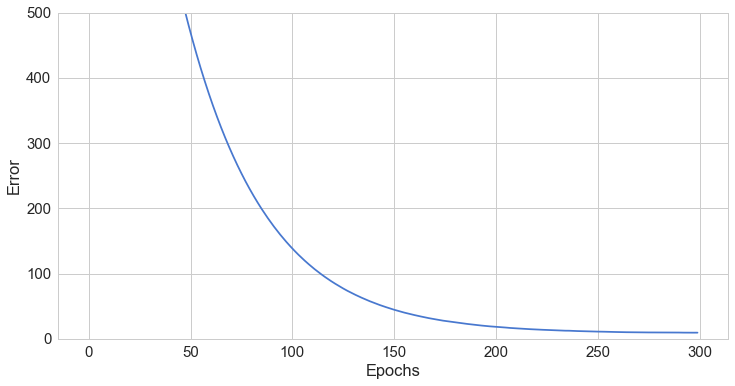

It’s time to reap the benefits of our hard work. Let’s train our NN for 300 epochs with 50 neurons in the hidden layer:

1nn = NNClassifier(n_classes=N_CLASSES,2 n_features=N_FEATURES,3 n_hidden_units=50,4 l2=0.5,5 l1=0.0,6 epochs=300,7 learning_rate=0.001,8 n_batches=25,9 random_seed=RANDOM_SEED)1011nn.fit(X_train, y_train);Evaluation

First, let’s have a look at the error change as the number of training epochs increase:

1plot_error(nn)

Good, it look like it is converging to a low value. More importantly, let’s check how good our model’s predictions are on the training and test sets:

1print('Train Accuracy: %.2f%%' % (nn.score(X_train, y_train) * 100))2print('Test Accuracy: %.2f%%' % (nn.score(X_test, y_test) * 100))1Train Accuracy: 91.80%2Test Accuracy: 81.65%Our test accuracy is not that good, especially when compared to the results obtained via other models. Let’s check the probability distribution for a single example:

1nn.predict_proba(X_test[1:2])1array([[ 0.09006643, 0.08982926, 0.09148965, 0.08801483, 0.08905539,2 0.09358783, 0.18462954, 0.08784268, 0.09758406, 0.08790033]])You can “clearly” see that the most probable digit is 6.

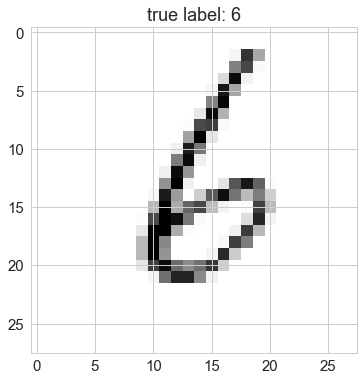

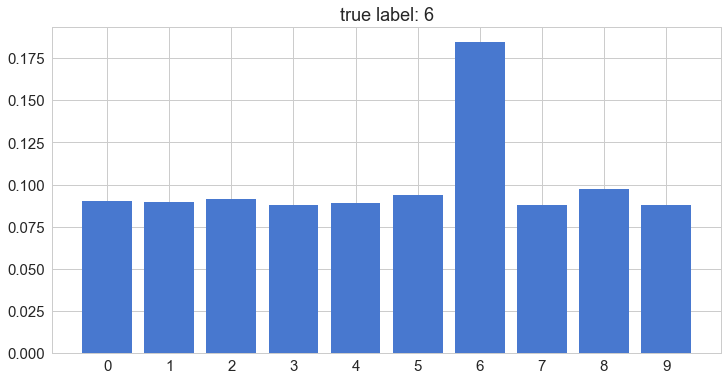

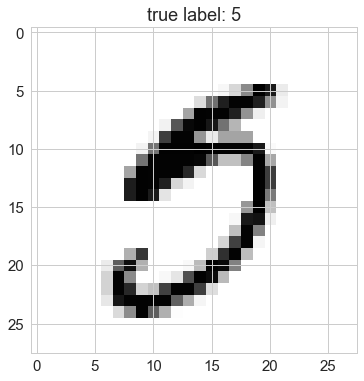

Correct prediction

Let’s look at the image itself:

1plot_digit(X_test, y_test, idx=1)

And the probability distribution:

1plot_digit_dist(X_test, y_test, idx=1, model=nn)

Our model looks quite sure about its prediction. Let’s have a look at a wrong prediction:

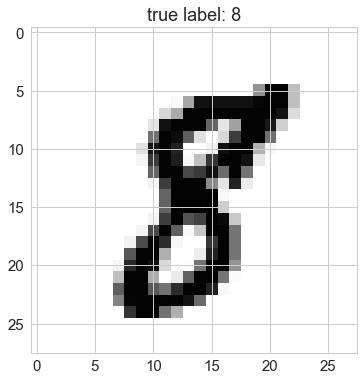

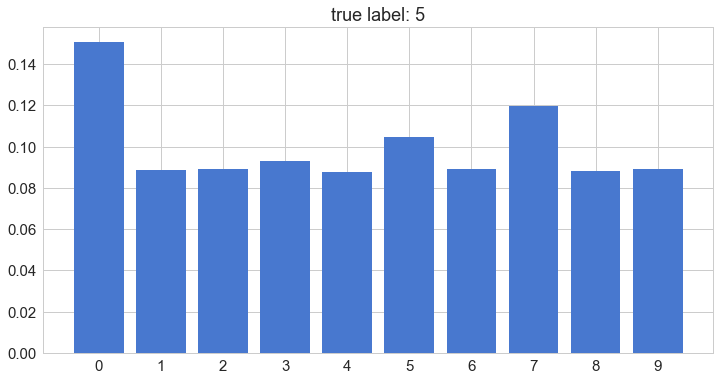

Wrong prediction

1plot_digit(X_test, y_test, idx=70)

1plot_digit_dist(X_test, y_test, idx=70, model=nn)

Come on, look at that picture. How is that a 5?

MLE = picking the most probable digit

Ok, but how does the prediction work? Simply put, it uses the most probable value in the class distribution:

1mle(nn.predict_proba(X_test[:5]))1array([1, 6, 1, 0, 5])1nn.predict(X_test[:5])1array([1, 6, 1, 0, 5])Trying a bit harder

The performance of our model was not that great. Can we improve on that?

Let’s try to scale our input data:

1from sklearn.preprocessing import scale, normalize23X_train_std = scale(X_train.astype(np.float64))4X_test_std = scale(X_test.astype(np.float64))56nn = NNClassifier(n_classes=N_CLASSES,7 n_features=N_FEATURES,8 n_hidden_units=50,9 l2=0.5,10 l1=0.0,11 epochs=300,12 learning_rate=0.001,13 n_batches=25,14 random_seed=RANDOM_SEED)1516nn.fit(X_train_std, y_train);1718print('Test Accuracy: %.2f%%' % (nn.score(X_test_std, y_test) * 100))1Test Accuracy: 84.80%Not bad, about 3% increase using simple preprocessing. What if we fiddle with the parameters a bit:

1nn = NNClassifier(n_classes=N_CLASSES,2 n_features=N_FEATURES,3 n_hidden_units=250,4 l2=0.5,5 l1=0.0,6 epochs=500,7 learning_rate=0.001,8 n_batches=25,9 random_seed=RANDOM_SEED)1011nn.fit(X_train, y_train);1213print('Test Accuracy: %.2f%%' % (nn.score(X_test, y_test) * 100))1Test Accuracy: 86.77%Another 2% increase. Now, we’re in the “acceptable” range. Will the combination of the two approaches yield an even better result? Why don’t you try it out?

Conclusion

What a journey, right? We’ve learned a lot about the inner workings of the Neural Network models. More importantly, we’ve implemented the backpropagation algorithm - twice! Hopefully, you got some practical understanding of the processes involved in training a Neural Network. Can you adapt the code and make a Deep Neural Network?

References

Share

Want to be a Machine Learning expert?

You'll never get spam from me